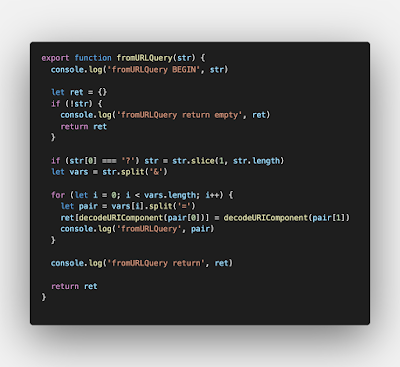

Code is hard. One way to make it easier is to make it pretty. Even simple, short code can become difficult to reason about if it's all scrunched together:

That code is pretty simple, but difficult to reason about. The interspersed debug logging adds a lot of noise and detracts from overall legibility. Just spacing it out helps a lot:

What else can we do? The first declaration of ret is kind of pointless: let's move that closer to where it actually matters, and just return an empty object from the first guard clause. To make sure we print what's actually in the parameter we'll also JSONize it so we don't miss strings that look empty, but aren't. We'll also throw in a quick log wrapper.
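The original listing isn't reproduced here, so as a rough sketch of those three changes, assume a hypothetical `parsePairs` function that turns a string like "a=1;b=2" into an object. The function name, the input format, the `DEBUG` flag, and the `log` helper are all illustrative assumptions, not the code under discussion.

```javascript
// Hypothetical stand-in for the function being refactored.
const DEBUG = false;

// Quick log wrapper: one place to switch debug output on or off.
const log = (...args) => {
  if (DEBUG) console.log(...args);
};

function parsePairs(input) {
  // JSON.stringify makes whitespace-only strings visible: "   " vs "".
  log('parsePairs input:', JSON.stringify(input));

  // Guard clause: nothing usable, so return an empty object right away.
  if (typeof input !== 'string' || input.trim() === '') {
    return {};
  }

  // Declare ret here, next to the loop that actually fills it.
  const ret = {};

  for (const pair of input.split(';')) {
    const [key, value = ''] = pair.split('=');
    ret[key.trim()] = value.trim();
    log('parsed', key.trim(), '->', value.trim());
  }

  return ret;
}
```

With the declaration next to the loop, the guard clause no longer depends on a variable set up a dozen lines earlier.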

The string pre-processing is fine as it is. We can improve the loop's readability without much effort:

Will we increase comprehension by switching to more-modern JS practices?

Kind of a toss-up, but I think so.
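To make the comparison concrete, here is a sketch using a hypothetical key/value parser (an assumption for illustration, not the original function): the same object built first with an explicit loop, then with `Object.fromEntries` over mapped pairs.

```javascript
// Hypothetical example: turn "a=1;b=2" into { a: '1', b: '2' }.

// Loop version: explicit accumulator, one step per line.
function parseWithLoop(input) {
  const ret = {};
  for (const pair of input.split(';')) {
    const [key, value = ''] = pair.split('=');
    ret[key.trim()] = value.trim();
  }
  return ret;
}

// More-modern version: map each pair to [key, value], then build the object.
const parseWithEntries = (input) =>
  Object.fromEntries(
    input
      .split(';')
      .map((pair) => pair.split('='))
      .map(([key, value = '']) => [key.trim(), value.trim()])
  );
```

Both produce the same object for the same input; which one reads better is largely a matter of what the team is used to.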

Ultimately, however, this is a function that would be better tested either via an actual test, or in the debugger. Logging is fine for long-running testing, but this function is short enough, and clean enough, that we might as well just set some breakpoints within the method, or at the call point.

Bucky Bits

Programming and rants for the "Developmentally Disabled."

Friday, July 17, 2020

Sunday, January 20, 2019

Snowflake Sociology

"Snowflake" isn't a term I enjoy using: it's derogatory, demeaning, and can be used as a dismissive approach to ideas we don't like. Until you're snowflaked, it's an easy thing to not like.

I was finally snowflaked a while back. I sat on it for some time, but given today's general political and conversational climate, I wanted to discuss the experience. Someone asked a Stack Overflow question I thought was very clear in its intent, roughly:

> I can't seem to wrap my head around the logic for writing a function that looks something like eight(times(five)) in JavaScript.

They want a math DSL, and they provided examples:

> eight(times(five)) should return 40
>
> three(plus(two)) should return 5

I mean... this is obvious. To me. Not to Ryan and Amy. They asked for clarification, I told them both it seemed very straight-forward what was being asked. Their hangup (at first)? "Eight isn't a verb, so it can't have behavior."

What? Are all method names verbs? (No.) Are all method names in a DSL verbs? Most assuredly not (attr_accessor is not a verb, it's a declarative assertion of intent). To me, it was crystal clear what was being asked (and apparently it was equally clear to the two people that answered it).
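For the curious, one possible way to get that behavior is sketched below. It is not necessarily how either answer approached it, and the `number` and `operator` helpers are names made up for this sketch.

```javascript
// A number either returns its value (called with nothing)
// or hands its value to the operation it was given.
const number = (n) => (operation) => (operation ? operation(n) : n);

// An operator captures its right-hand number function and returns
// a function that waits for the left-hand value.
const operator = (fn) => (right) => (left) => fn(left, right());

const two = number(2);
const three = number(3);
const five = number(5);
const eight = number(8);

const plus = operator((a, b) => a + b);
const times = operator((a, b) => a * b);

console.log(eight(times(five))); // 40
console.log(three(plus(two)));   // 5
```

The nouns carry behavior just fine: `eight` is a function that knows what to do with whatever operation it is handed.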

I was accused of being condescending and misogynistic. Many people have accused me of being condescending; when you're far enough along on the spectrum to be diagnosable, it happens. Not proud of it, but it happens. Misogynistic?! To anybody that actually knows me the idea is laughable. I was insulted, and badly. It hurt. It was embarrassing.

Could I have expressed my opinion that I thought the question was obvious in a better way? Possibly (and probably, but honestly, it wouldn't even register with most people). Could I have said something like "It seems like they're asking for a DSL; given the provided examples I think the intention is to treat the function names like mathematical operators"? I could have just said that and let it be.

I didn't: I said it was "obvious" what was being asked. That was the mistake. Why? Because when something isn't "obvious" to someone, and it is to someone else, it can make the other person feel stupid. This can escalate quickly (as it did).

I have a different view of things: if something is obvious to someone else, and not to me, it means I have a knowledge gap. I don't like knowledge gaps. I might feel shame: one of my traits is that I know things. It partially defines who I am. When I don't know something, it diminishes who I am in some way. It's a non-functional emotional behavior (that I'm working on).

That I didn't know something doesn't trigger an external redirection of that shame, however: I don't take out my (perceived) shortcomings on the person that brought it up, at least not in a public forum. And I certainly don't accuse other people of being biased in any way (if they're being a jerk I'll call them on that, though).

Here's the thing: nobody likes to be wrong. People like to be told they're wrong even less. Some people lash out when they're told they're wrong, or that something might be obvious to somebody else.

There's always a balance. Online intent is very easy to mis-judge. The word "obvious" can be a trigger.

Lesson learned.

Back yet again!

As part of a blogging/writing blitz I'm back on this platform as well as others.

I'll be blogging here, and as one of the bloggers at the Maker's End Blog, discussing a wide variety of topics, generally related to technology, but occasionally stretching far afield into general Making, society, crafting, and so on.

A few of the upcoming topics will include:

* JavaScript

* Embedded Systems

* Projects/Builds

* Technology Landscape

* Automating creative workflows

* Refactoring (with specific examples, usually from Stack Overflow)

* Code Quality Issues and Metrics

* IoT

* Integrations

As always, I'm open to suggestions for topics.

I just built the Pimoroni Keybow keyboard; check out the build and review (and build video). It's a pretty slick 12-key auxiliary keyboard powered by the Raspberry Pi Zero WH with an RGB LED under each key. It acts like a standard keyboard (USB HID) and I'm pretty excited to experiment with it.

Also, stay tuned for excerpts from an upcoming Maker's End book series, the Inspiration Series, which will cover a number of embedded platforms including the Arduino, various Adafruit boards, Sparkfun QWIIC boards and components, and some DFRobot boards and parts. First out of the gate will be the Arduino Inspiration book, a project-based Arduino tutorial, with a goal of inspiring relative newcomers to the world of artistic expression using technology.

Friday, October 20, 2017

Cura 3 on OS X Crashes on Startup

This one was easy to fix, although I'm not sure what the consequences will be.

On startup the new version of Cura would start to open (evidenced by Activity Monitor process) then close. I decided to delete my existing Cura app data since (a) need to recalibrate the printer anyway, (b) getting an additional new printer anyway, and (c) didn't know what else to try.

Navigate to ~/Library/Application Support/ and look for anything Cura-related. In my case, there was a Cura folder with a directory for the two previous versions I'd had installed.

Go ahead and delete those, you didn't want them anyway.

My Cura 3.0 now starts just peachy.

Coming Soon

I have a number of projects, both software- and hardware-related, coming down the pike right quick now. Think Raspberry Pi 0W, Raspberry Pi 3, NodeMCU, IoT, Elixir/Phoenix, Kafka, AWS, Nerf gun mods/hacks, and so on. I'm open for business in a new workspace, with new tools and a new mindset.

Let the games begin.

Tuesday, August 11, 2015

Pirate Stack

LAMP? MEAN? No, mateys, PIRATE STACK!

(A)RRR(GH): React, Relay, RethinkDB.

The stack is mine. I win the internet.

Wednesday, August 05, 2015

Styling Atom Editor UI Elements

I'm in the process of switching to Atom as my daily text editor (from Sublime Text 3) and needed my UI element font to be much smaller. Doing this in Atom is blissfully easy: just edit your styles.less file and you're done. The trick is understanding which elements you want to style.

Most elements I needed re-sized are easy:

The styles.less is accessible directly in your $HOME/.atom directory, or through Atom itself in Settings -> Themes.
